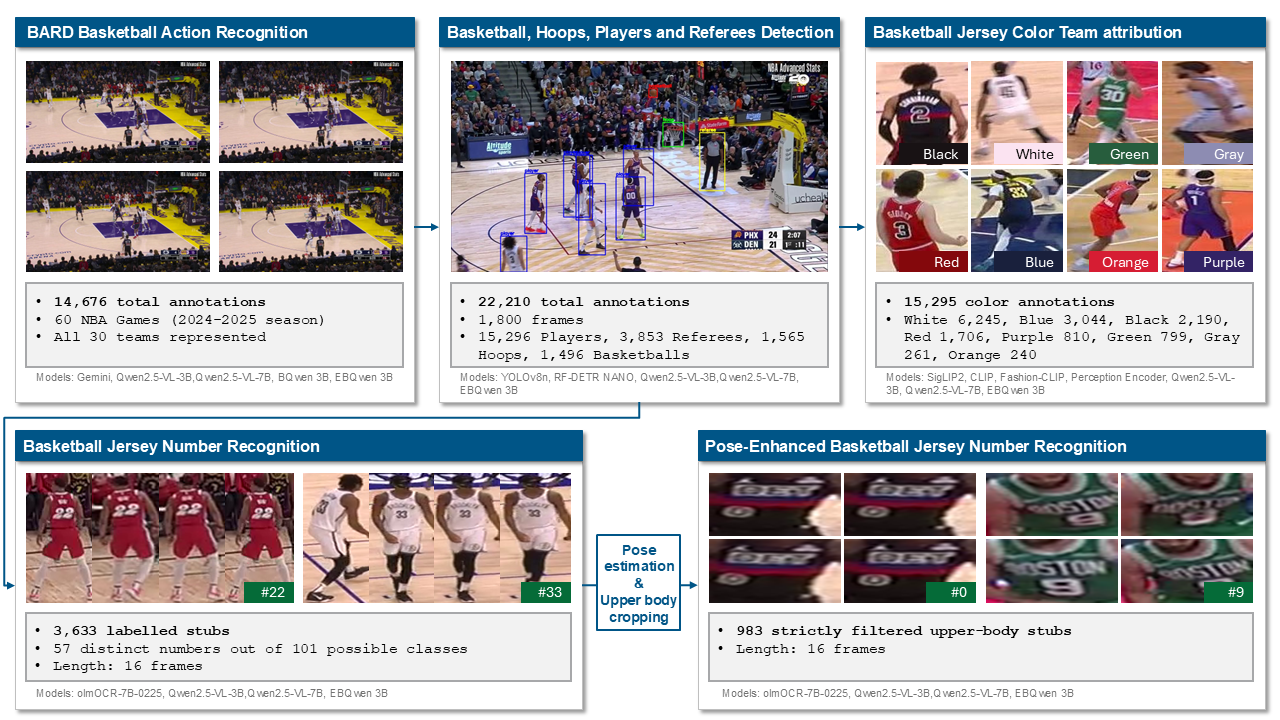

This work builds upon the Basketball Action Recognition Dataset (BARD), originally introduced to enable supervised learning for primary action recognition in NBA game footage. However, BARD’s initial design lacks the granular annotations required to develop multi-stage computer vision pipelines involving object detection, jersey number recognition (JNR) and team attribution. To address these limitations, we present E-BARD (Extended Basketball Action Recognition Dataset), which bridges the gap between isolated action recognition and end-to-end scene-level reasoning through three key contributions.

First, we introduce a new set of interrelated datasets that augment the original BARD videos with dense visual annotations. This includes detection data for key entities (ball, hoop, referee, player), team attribution based on uniform colors and JNR, all integrated to directly support and enrich the original action captions. Second, we establish a comprehensive benchmark for these specific visual understanding tasks using representative state-of-the-art models. We evaluate YOLO and RF-DETR for object detection; CLIP, SigLIP2, FashionCLIP, and the Perception Encoder for team color attribution; and olmOCR, Qwen2.5-VL-3B, and Qwen2.5-VL-7B for JNR. Finally, we propose a holistic, integrated approach based on Qwen2.5-VL, demonstrating the capacity of a unified multimodal framework to jointly address all subtasks simultaneously. Ultimately, E-BARD provides a comprehensive benchmark for multi-task basketball video understanding.

We have open-sourced the code for downloading the data and reproducing the results presented in our paper.

If you use BARD in your work, please cite the associated paper.